Docker and Jupyter are two of the most useful tools of the past few years for building easily repeatable development environments and experiments. Let's look at how we can use Docker Compose to rapidly deploy a Jupyter environment with a Postgres database.

Before moving forward, make sure you have Docker and Docker Compose installed. Installing them is outside the scope of this tutorial, but instructions can be found on the Docker website.

Once Docker and Docker Compose are up and running, let's define the file structure of our project. Start by creating three directories in your current directory: jupyter, notebooks, and data. Within the jupyter directory, create a file named Dockerfile, and then in your current directory, create a file named docker-compose.yml.

    $ mkdir ./jupyter ./data ./notebooks
    $ touch ./jupyter/Dockerfile
    $ touch ./docker-compose.yml

At this point, your working directory should have the following file structure:

    .
    ├── data
    ├── docker-compose.yml
    ├── jupyter
    │   └── Dockerfile
    └── notebooks

Let's add some contents to our Dockerfile. Fortunately, the Jupyter project provides a series of Dockerfiles with various configurations that we can start from. For this project, we will base our own Dockerfile on the datascience-notebook image.

Add the following contents to ./jupyter/Dockerfile:

    FROM jupyter/datascience-notebook

    RUN python --version

    RUN conda install --quiet --yes -c conda-forge osmnx dask

    RUN pip install -U geopandas \
        geopy \
        rtree \
        folium \
        shapely \
        fiona \
        six \
        pyproj \
        numexpr==2.6.4 \
        elasticsearch \
        geojson \
        plotly \
        tqdm \
        mapboxgl \
        cufflinks \
        geohash2 \
        tables \
        mixpanel \
        GeoAlchemy2

    VOLUME /notebooks
    WORKDIR /notebooks

While the bulk of what's needed is provided by the jupyter/datascience-notebook base image, I find it's useful to define our own Dockerfile to include dependencies that may not be included in the base image. In this case, we're installing a handful of dependencies that are useful for geospatial data processing, such as geopandas and geopy.
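Once the image is built, a quick way to confirm that the extra dependencies made it in is to check for them from a notebook cell. The following is a minimal sketch, not part of the original project: the helper name `missing_packages` is hypothetical, and the list of import names is only a sample of the packages installed above.

```python
from importlib.util import find_spec


def missing_packages(names):
    """Return the names that cannot be imported in the current environment."""
    return [name for name in names if find_spec(name) is None]


# Import names for a few of the packages installed in the Dockerfile above
# (a sample chosen for illustration, not the full list).
expected = ["geopandas", "geopy", "folium", "shapely"]
print("Missing:", missing_packages(expected))
```

An empty list means every package in the sample is importable inside the container.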

Now that we have our Dockerfile defined, let's add some content to ./docker-compose.yml that will help us get Jupyter and Postgres kicked off.

Add the following content to ./docker-compose.yml:

    version: "3"
    services:
      jupyter:
        build:
          context: ./jupyter
        ports:
          - "8888:8888"
        links:
          - postgres
        volumes:
          - "./notebooks:/notebooks"
          - "./data:/data"
      postgres:
        image: postgres
        restart: always
        environment:
          POSTGRES_USER: data
          POSTGRES_PASSWORD: data
          POSTGRES_DB: data

This file defines two services: jupyter and postgres. The jupyter service will be built from the Dockerfile defined above, and the postgres service will use the official postgres image from Docker Hub. The postgres service will be accessible from the jupyter service at the hostname postgres, and to keep things easy, the database name, username, and password for the postgres service are all set to "data". Finally, we mount the two working directories created above, ./notebooks and ./data, to the jupyter service at /notebooks and /data respectively.
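To make the connection details concrete, here is a rough sketch of how a notebook might reach the database using the hostname and credentials from the compose file. The helper names `postgres_url` and `read_server_version` are hypothetical, port 5432 is the Postgres default, and the query function assumes SQLAlchemy plus a Postgres driver such as psycopg2 are available in the image (the driver is not installed by the Dockerfile above, so that part is an assumption).

```python
from urllib.parse import quote_plus


def postgres_url(user="data", password="data", host="postgres",
                 port=5432, database="data"):
    """Build a SQLAlchemy-style URL for the database defined in docker-compose.yml."""
    return (
        f"postgresql://{quote_plus(user)}:{quote_plus(password)}"
        f"@{host}:{port}/{database}"
    )


def read_server_version(url):
    """Run a trivial query to confirm the jupyter service can reach postgres."""
    # Imported lazily: SQLAlchemy and a Postgres driver are assumed to be installed.
    from sqlalchemy import create_engine, text

    engine = create_engine(url)
    with engine.connect() as conn:
        return conn.execute(text("SELECT version()")).scalar()


url = postgres_url()
print(url)
```

Calling `read_server_version(url)` from a notebook running inside the jupyter container should return the Postgres version string if the two services can talk to each other.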

If all of the above went well, all that's left to get things running is the following command, run from our working directory:

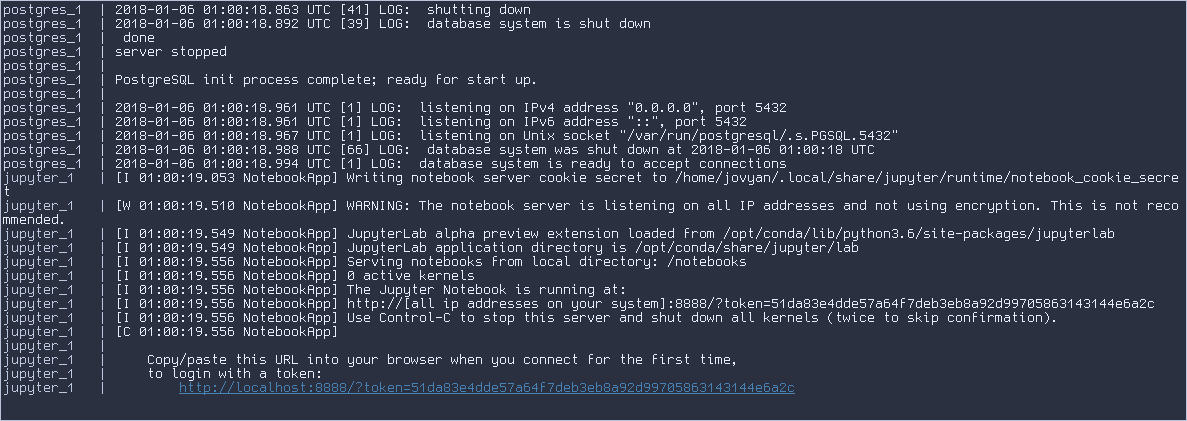

    $ docker-compose up

This should result in the jupyter container being built, and then both the jupyter and postgres services being started. You should see something like this in your console:

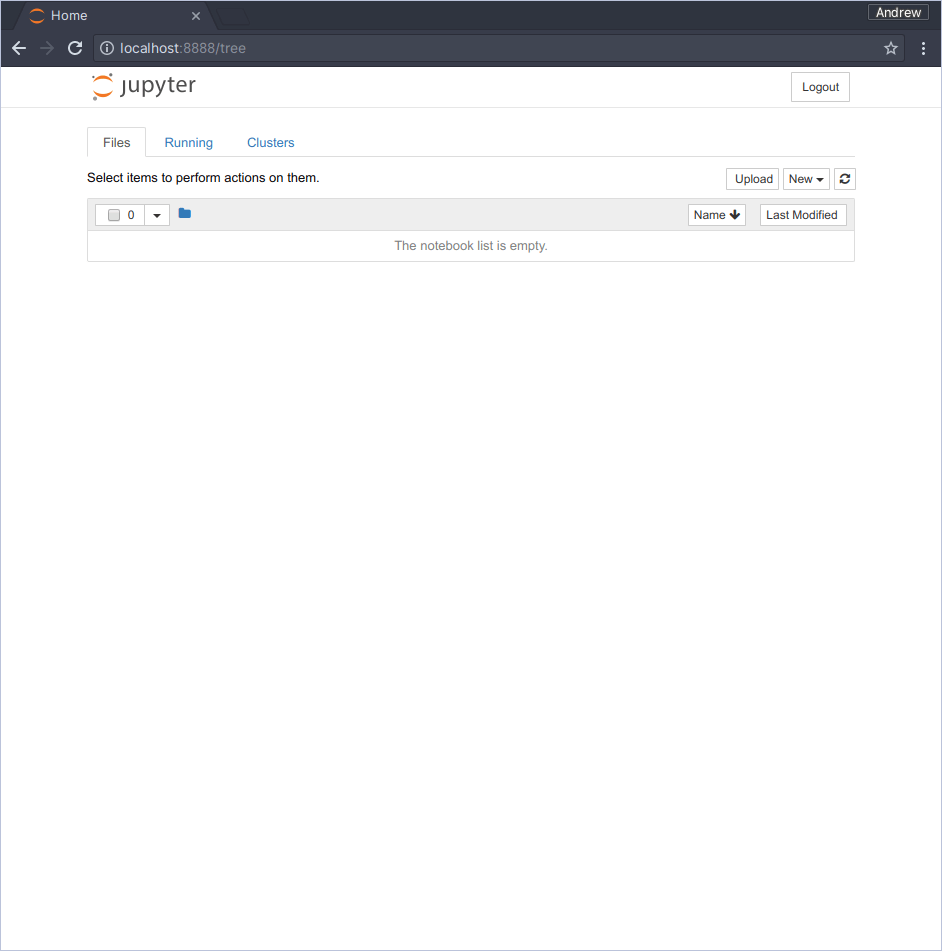

The first time we access the Jupyter web interface, we need to use the link provided in the console, which includes an access token. After that, we can visit http://localhost:8888 directly. Click the link in the console, and your browser should open to the Jupyter interface.

From here, you can create a new notebook. The .ipynb file will live in the host machine's filesystem at ./notebooks. You can use the ./data directory to store any data that you might want to access from your notebook.
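As a small illustration of the shared data directory, the sketch below lists files that a notebook can see under /data, which is where ./data is mounted in the jupyter container. The helper name `list_data_files` and the CSV pattern are assumptions made for the example.

```python
from pathlib import Path


def list_data_files(data_dir="/data", pattern="*.csv"):
    """Return the sorted names of files in data_dir that match pattern."""
    return sorted(p.name for p in Path(data_dir).glob(pattern))


# Inside the container, /data is the mount point for the host's ./data directory.
print(list_data_files())
```

Any file dropped into ./data on the host should show up here without restarting the container, since the directory is mounted rather than copied.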

All code described above can be found on GitHub.

I hope this was helpful! If you have any questions, go ahead and open an issue.